Introduction

Better open and click-through rates result in more website visitors and sales, and every marketer wants that.

But how do you do it?

One way is to start running A/B tests on your email campaigns. In this guide, we’ll show you what A/B testing is and how it can improve your open and click-through rates, as well as arm you with a number of ideas for A/B tests you can run on your email campaigns to get better results.

What is A/B testing and why should marketers care?

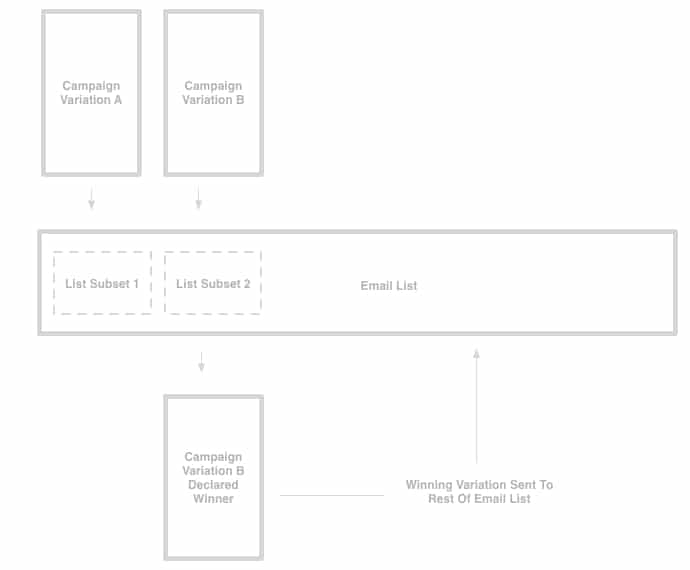

A/B testing, in the context of email, is the process of sending one variation of your campaign to a subset of your subscribers and a different variation to another subset of subscribers, with the ultimate goal of working out which variation of the campaign garners the best results.

A/B tests vary in complexity. A simple test might send multiple subject lines to see which one generates more opens, while a more advanced test might pit completely different email templates against each other to see which one generates more click-throughs.

If you’re using an email tool like Campaign Monitor, A/B testing your campaigns is very easy: you use the email builder to create 2 different variations of your email, and it automatically sends them to 2 different subsets of your list to see which variation performs best.

Once the test has concluded and the winning version has been found, it’ll automatically send the winning version to the rest of your list.
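To make the mechanics concrete, here is a minimal Python sketch of the process described above: a random slice of the list is split into two test groups, and the variation with the higher open rate is chosen for the remainder. The function names, the 20% test fraction, and the open-rate comparison are our own illustrative assumptions, not how Campaign Monitor or any other tool implements it.

```python
import random


def split_for_ab_test(subscribers, test_fraction=0.2, seed=None):
    """Randomly split a list into group A, group B, and the remainder.

    test_fraction is the share of the list used for the test overall
    (an assumed default; real tools let you choose this).
    """
    rng = random.Random(seed)
    shuffled = list(subscribers)
    rng.shuffle(shuffled)

    test_size = int(len(shuffled) * test_fraction)
    half = test_size // 2  # each variation gets an equal share

    group_a = shuffled[:half]
    group_b = shuffled[half:2 * half]
    remainder = shuffled[2 * half:]
    return group_a, group_b, remainder


def pick_winner(opens_a, sent_a, opens_b, sent_b):
    """Return 'A' or 'B', whichever variation has the higher open rate.

    Ties go to 'A'. This compares raw rates only; it does not check
    whether the difference is statistically meaningful.
    """
    rate_a = opens_a / sent_a if sent_a else 0.0
    rate_b = opens_b / sent_b if sent_b else 0.0
    return "A" if rate_a >= rate_b else "B"


if __name__ == "__main__":
    subscribers = [f"user{i}@example.com" for i in range(1000)]
    group_a, group_b, remainder = split_for_ab_test(subscribers, seed=42)
    print(len(group_a), len(group_b), len(remainder))
    print(pick_winner(opens_a=30, sent_a=100, opens_b=25, sent_b=100))
```

Shuffling before splitting matters here: if the list is sorted by sign-up date or engagement, taking the first rows as group A would bias the result.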

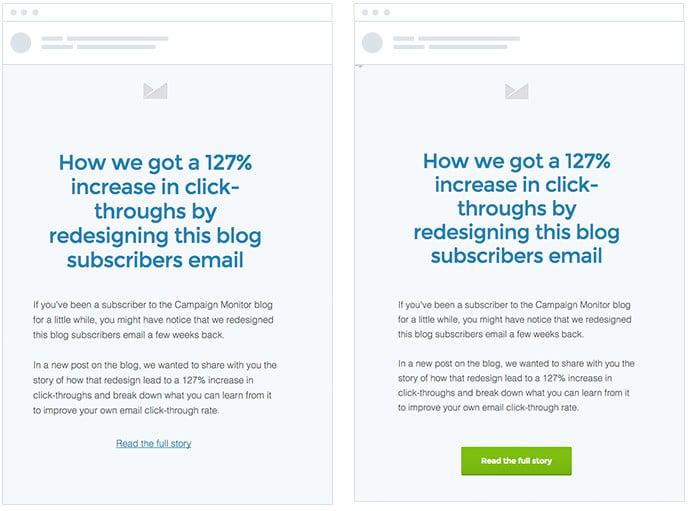

A/B testing your campaigns is a great way to increase the open and click-through rates of your emails. For example, here at Campaign Monitor, we’ve tested everything from our subject lines to the copy on our call-to-action buttons. We’ve even tested different templates against each other to see which one works best, and were able to get a 127% increase in click-throughs as a result.

2 A/B testing stats you should consider

For those who prefer empirical data, here are 2 A/B testing stats to help you see the importance of A/B testing your emails.

1. Brands split on split testing

A whopping 39% of brands don’t test their broadcast or segmented emails.

This has a lot more to do with you and your email marketing strategy than you may think.

This is important because it indicates that you have an edge over the brands that don’t test their emails. By failing to A/B test, their campaigns are not running at their optimum potential.

By A/B testing your emails, you can ensure that your emails are performing at their best.

2. Small changes make big differences

When it comes to A/B testing your emails, one thing you need to know is that small changes can make big differences.

For example, when HubSpot decided to test the effects of using a personalized sender name as opposed to a generic company name, they got some pretty interesting results. The version of the email that had a person’s name as the sender had a 0.53% higher open rate and a 0.23% higher click-through rate. While these may seem like insignificant figures, this small improvement resulted in 131 leads being gained.

While no 2 emails or email marketing campaigns are the same, it is safe to say that a better version of your email can bring significant changes to your engagement rates and revenue, and the only way to find that better version is through split testing.

What should I test?

Using email marketing tools, you can test almost any aspect of your email marketing campaigns to improve the results. Here are a few ideas to help get you started:

Subject lines

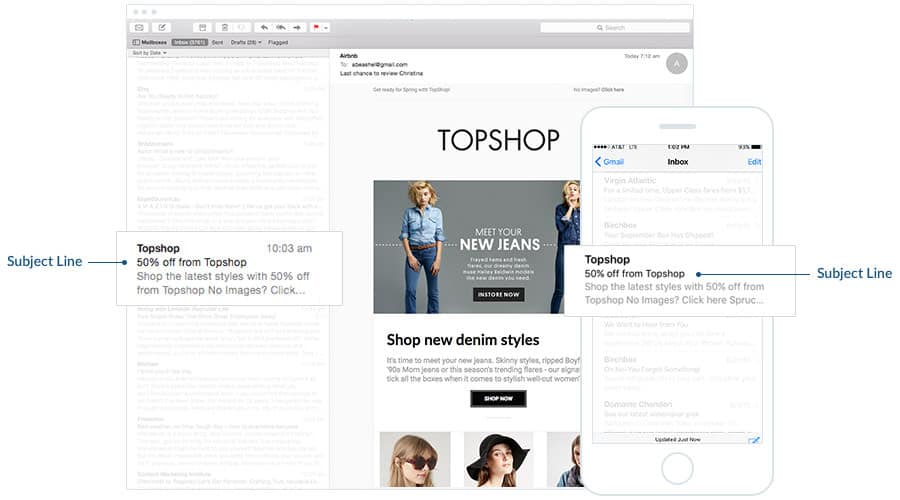

The subject line is one of the most prominent elements of your campaign when viewed in the inbox. On most devices, the subject line is formatted with darker, heavier text to help make it stand out among the other details of the email.

Given its prominence in the inbox and its effect on open rates, it should be an area of focus for your A/B testing.

So what kinds of tests can you run in your subject lines to try to drive increased opens? Here are a few ideas:

Length

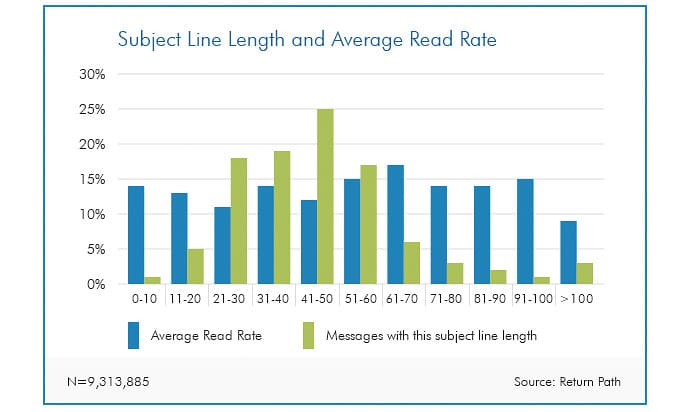

The ideal length of email subject lines is a hotly debated topic in email marketing, and a recent study from Return Path shows that the optimal length is around 61-70 characters.

However, your subscribers are unique and may react differently than those included in this study. Perhaps they are reading emails on mobile devices more often or are using older email clients, which show fewer characters in the subject line field.

Consider setting up your next campaign as an A/B test to see what subject line length works best for your audience.

Word order

The order in which you place the words in your email subject line can make a difference in how people read and interpret them and can potentially impact your email open rate.

Consider these two example subject lines for the same email:

- Use this discount code to get 25% off your next purchase

- Get 25% off your next purchase using this discount code

In the second variation, the benefit of opening the email (getting 25% off the next purchase) is placed at the beginning of the subject line. Given that English-speaking subscribers read left to right, this places the emphasis on the benefit readers will get from opening the email and can potentially increase open rates.

Next time you’re writing the subject line for your email campaign, consider testing the order of the words to see if front-loading the benefit can help improve your open rates.

Content

If your email contains multiple pieces of content (like a newsletter, for instance), then testing different pieces of content as the subject line can be a great way to improve your email open rates and learn what kind of content resonates with your audience.

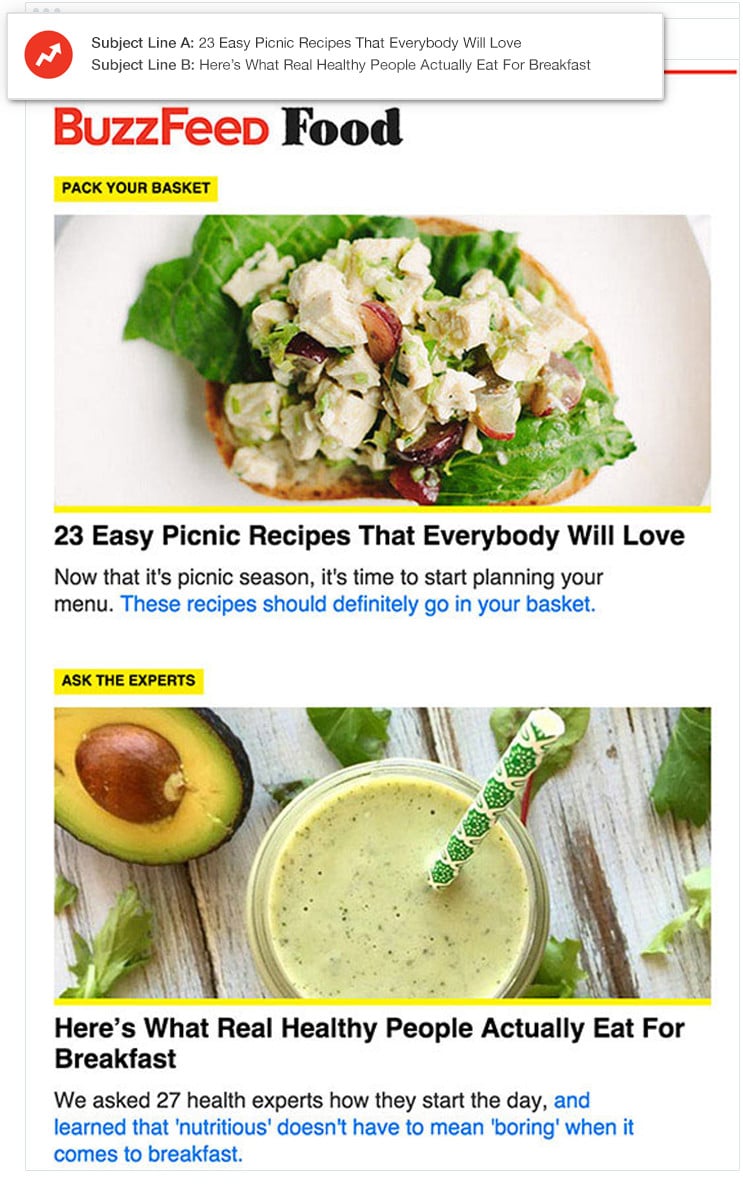

BuzzFeed does an excellent job of this in their newsletters.

Each newsletter BuzzFeed sends contains multiple pieces of content, and they A/B test featuring different content pieces in the subject line to see which drives the most opens.

Next time you’re writing a subject line for your email newsletter, consider testing different pieces of content in the subject line to increase the number of opens your campaign receives.

Personalization

According to our own study on Power Words in Email Subject Lines, the subscriber’s name is the single most impactful word you can add to your subject line, increasing opens by over 14%.

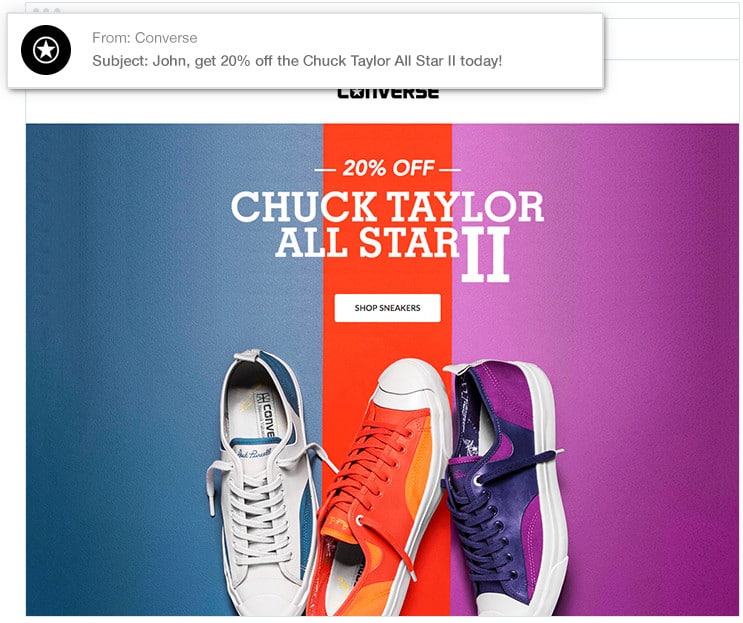

Converse uses the subscriber’s name to great effect in their email campaigns.

By using Personalization Tags to dynamically insert a subscriber’s name into the subject line field, they help their campaign stand out in the inbox and drive increased opens, click-throughs, and sales for their brand.

Find out if this same strategy would work for your own brand and subscriber base by A/B testing it on one of your upcoming campaigns. If you have your subscriber’s name stored in your email list, consider adding it to the subject line of your next campaign to see if it drives increased opens and clicks for your business.
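As a rough illustration of what such a test involves, the Python sketch below builds a generic and a personalized subject line for each subscriber, with a fallback for subscribers whose name is missing. The `{first_name}` placeholder, the function name, and the fallback value are hypothetical choices for this example; they are not the tag syntax of Campaign Monitor or any other email tool.

```python
def personalize_subject(template, first_name, fallback="Friend"):
    """Fill a subject line template with the subscriber's first name.

    Uses the fallback when the name is missing or blank, so no one
    receives a subject line with an empty gap in it.
    """
    name = (first_name or "").strip() or fallback
    return template.format(first_name=name)


# Variation A: generic. Variation B: personalized.
VARIANT_A = "Your new arrivals are here"
VARIANT_B = "{first_name}, your new arrivals are here"

if __name__ == "__main__":
    for first_name in ["Ana", "", None]:
        print(personalize_subject(VARIANT_B, first_name))
```

Deciding on the fallback before you send is worth the effort, since a blank name in the subject line can undo the benefit personalization is meant to deliver.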

Visuals

Research shows that the human brain processes visuals 60,000 times faster than text, which means using images in your email campaigns can be a powerful way to get your message across.

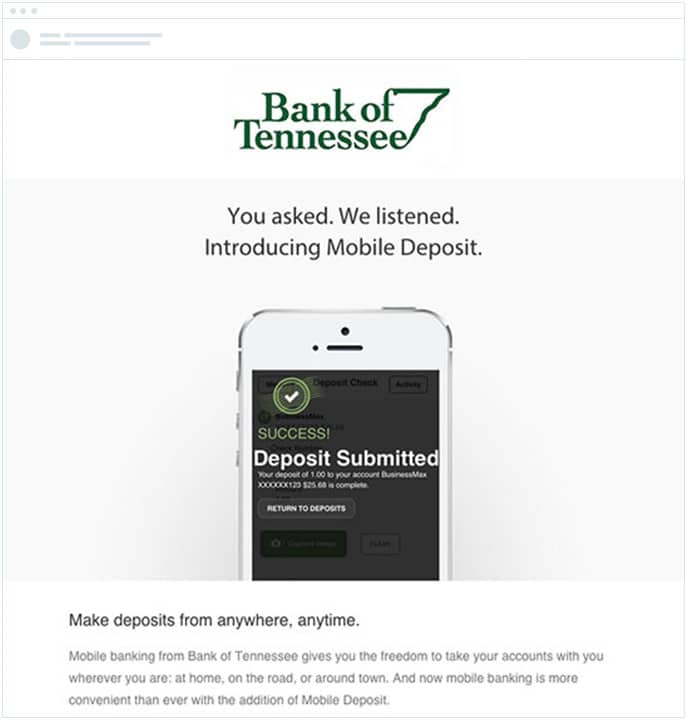

Bank of Tennessee knows this well and uses images effectively in their customer campaigns.

Alongside the copy informing subscribers of the new mobile deposit features, they include beautifully designed visuals that show the feature in action and make it easy for subscribers to understand what the new mobile app can do.

Should you be including visuals in your email campaigns? And what kind of visuals will work best?

Find out by A/B testing. There are a number of things you can test, so, to help you get started, we’ve compiled a few ideas:

Images or no images

While images clearly have a positive impact on Bank of Tennessee’s email above, it might not necessarily be the case for your emails, depending on the design and images chosen.

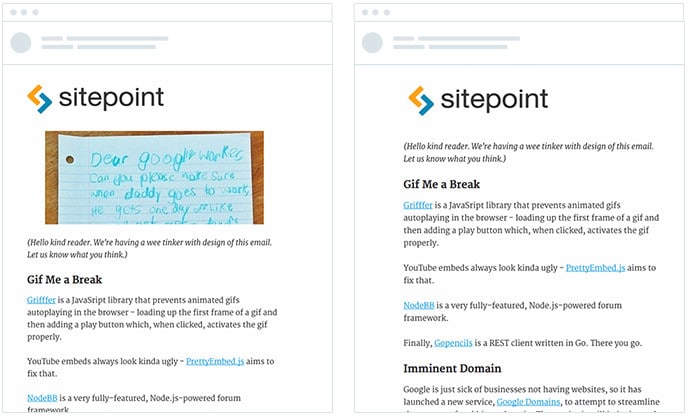

When SitePoint tested images in their newsletter, they actually saw a very slight decrease in conversions, as they found the images distracted people from the content.

While using images may work for some emails, it can detract from others, so discover whether or not they are working for you by A/B testing including them in your campaigns.

Style

There are many different types of visuals you can include in your email campaigns.

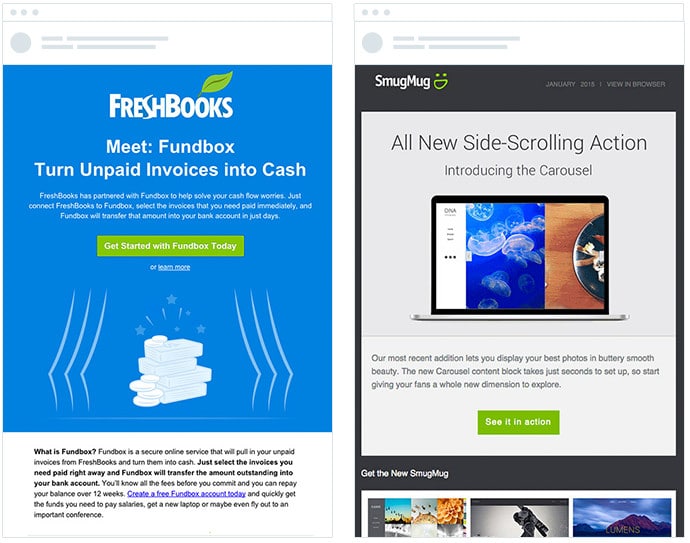

To illustrate, consider the following two examples, both of which are using email to announce new features in their products.

FreshBooks chooses to use a drawing style in their campaigns that closely mimics the visual style seen on their website, while SmugMug opts to show a screenshot of the interface displayed inside a Mac laptop.

Which works best? It completely depends on your brand, audience, and layout of your campaign.

So, next time you are creating an announcement campaign, consider A/B testing the style of images you include to see which works best for your business.

Copy

According to research by Microsoft, smartphones have left humans with such a short attention span that even a goldfish can hold a thought for longer periods of time.

According to the study, the average human attention span has fallen from 12 seconds in 2000—around the time the mobile revolution began—to eight seconds today.

A goldfish is estimated to have a 9-second attention span.

This reduction in attention span has significantly increased the importance of great writing in your emails. If you can’t quickly and easily explain your product or offer, you’ll struggle to get your subscribers to click through from your campaigns.

You can discover what great copy looks like for your audience by A/B testing different copy in your email campaigns.

There are a number of features in your copy that you can test, so we’ve compiled a few ideas to help get you started:

Length

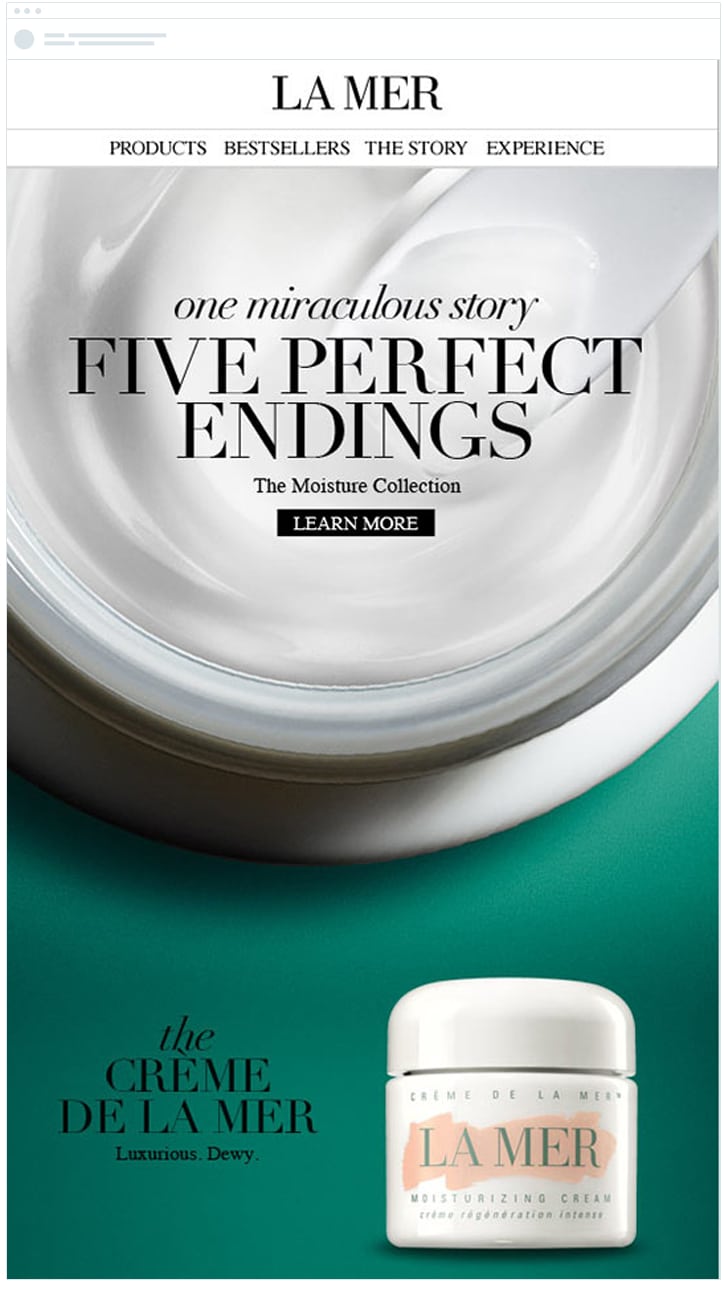

With the mobile revolution driving a decrease in attention spans, there’s a trend in email design towards using shorter copy.

This email from La Mer is a good example. It features minimal copy and, instead, allows the beautiful edge-to-edge imagery to really deliver the message.

Would this work for your brand? Or are you better off including longer-form copy that explains in detail the benefits and features of your product?

The answer largely depends on the design of your email, your audience, and the complexity of the product you are selling. Next time you are creating a new product announcement campaign, consider testing whether short or long-form copy gets more of your subscribers to click through and make a purchase.

Personalization

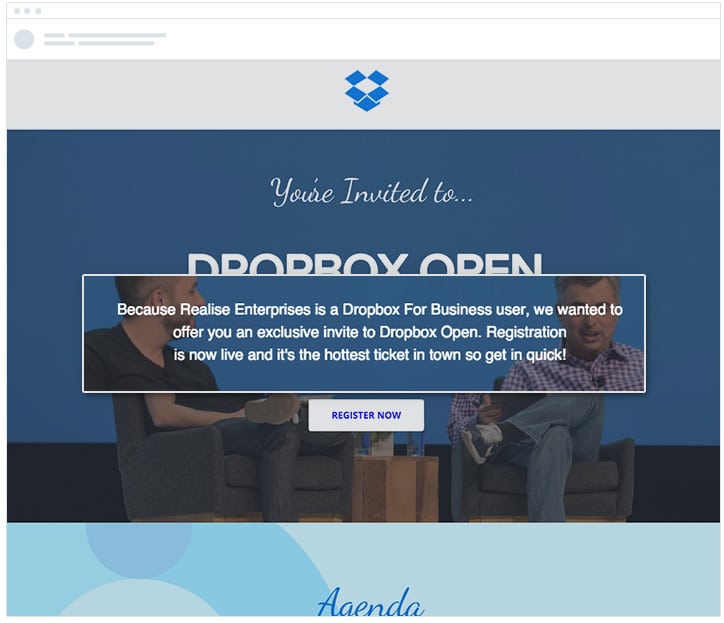

According to studies, including personalization in your email campaigns can increase click-through rates by over 14%.

The team at Dropbox realizes this and leverages it to their advantage, including the subscriber’s company name in the email to make the invite more relevant to the subscriber and increase the chance they’ll attend the event.

If you have extra details on your subscribers stored in your email list (such as their company name, location, or other attributes), consider testing personalized body copy in your next campaign to see if the increased relevance drives better results.

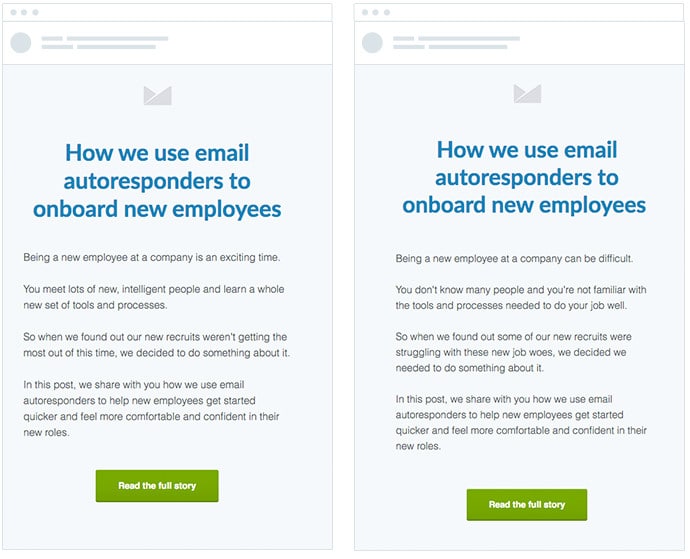

Tone

The tone you use in the body copy of your email campaigns can have a big effect on the number of click-throughs you receive.

Studies show that, when you incorporate positivity into your copy, you engage your reader’s brain in a much more powerful way, enabling them to easily understand your key messages and increasing their motivation to click through and purchase your product.

We tested this ourselves, and found that using positive language increased our email conversion rate by 22%.

Next time you are writing copy for your email marketing campaigns, give some thought to the tone in which you are writing and consider testing whether a positive tone could outperform a negative tone, in terms of driving click-throughs and purchases.

Calls to action

Your calls to action are one of the most important parts of your email marketing campaigns.

They help increase your email click-through rate by making it clear to readers exactly what the next step is.

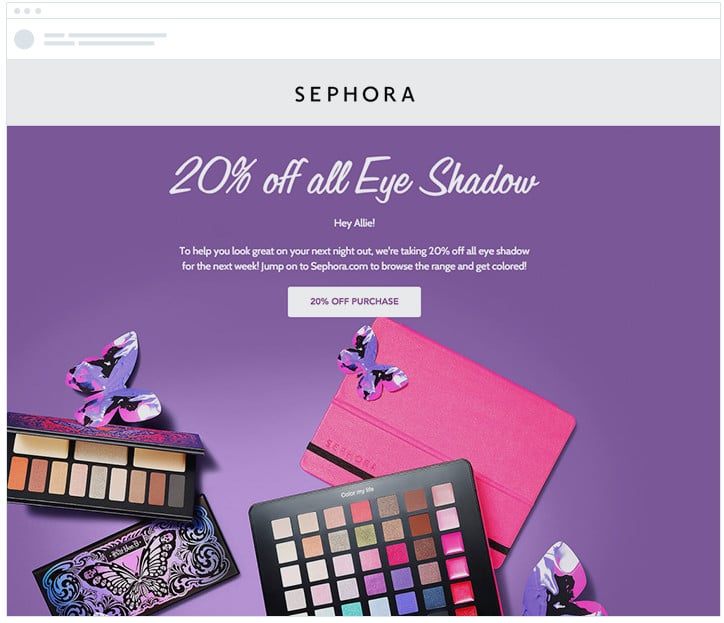

Sephora uses them well in their email campaigns, including a prominent call to action that ensures readers know exactly what they need to do next.

Given the importance of calls to action in driving click-throughs, it’s a good idea to A/B test them to make sure you’re getting the best results.

Here are a few ideas as to what you can test:

Button vs. text

There are generally two options for creating calls to action in your email campaigns: adding buttons or using simple hypertext links.

In our own testing, we’ve found that using buttons is a better approach, and we were able to get a 27% increase in click-throughs by using a button instead of a text link.

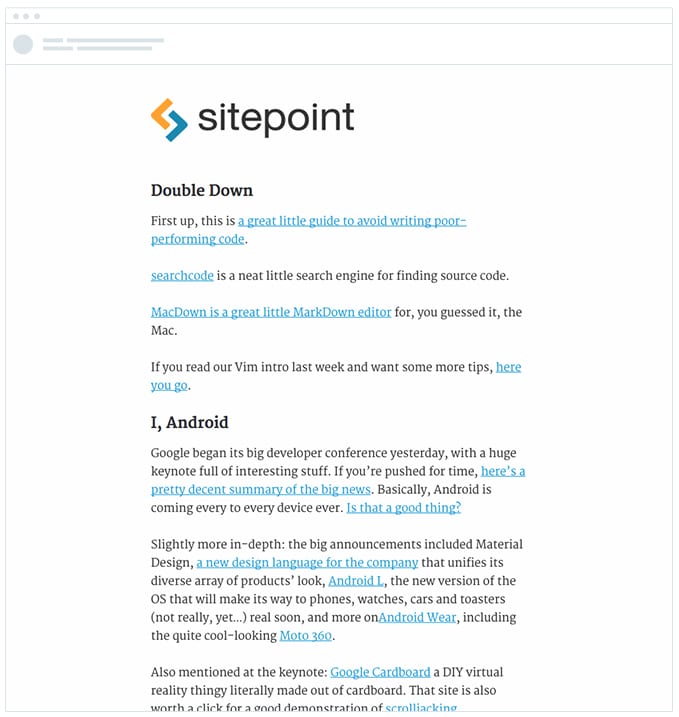

However, this isn’t necessarily applicable for all campaigns. For instance, SitePoint’s newsletter gets an amazing click-through rate from using simple text links.

You can find out which approach is best for your campaigns through A/B testing. Tools like our own email builder allow you to add both text links and buttons to your email campaigns and enable you to easily test which one works best for your unique campaigns and audience.

Button copy

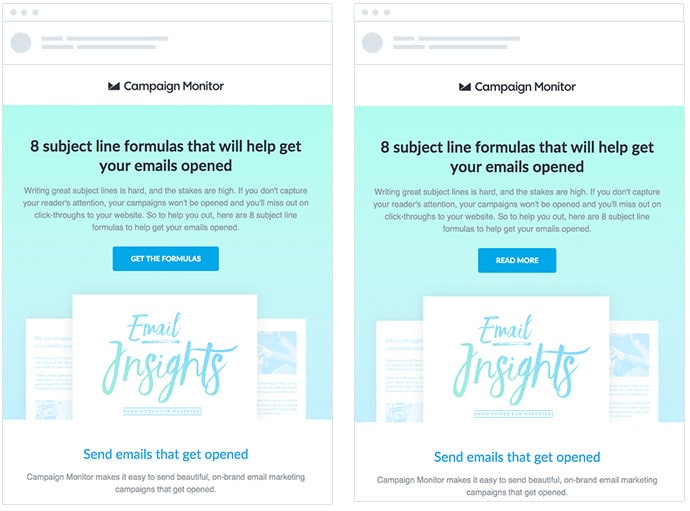

Regardless of whether you choose buttons or simple text links, you must also carefully consider the copy you use for them, as it can affect the number of people who click through from your campaigns.

In our own testing, we found that using specific, action-oriented copy such as “Get the formulas” was better than using generic copy like “Read more,” improving our email click-through rate by over 10%.

Although specific, action-oriented copy may have worked well for us in the test, that doesn’t necessarily mean it will have the same effect on your campaigns.

For your next campaign, consider testing generic button copy against specific, action-oriented copy to see which works best for your audience.

3 tips for running more effective A/B tests

Tools like Campaign Monitor, with their drag-and-drop email builders, make it really quick and easy to run A/B tests on your email campaigns, making it unnecessary for you to code multiple versions of the email and test them across different devices and email clients—you simply make the changes you want and click send.

However, before you dive in and start setting up A/B tests, there are a few strategic tips you can use to help increase the chances of getting success from your A/B testing.

1. Have a hypothesis

To have the highest chance of getting a positive increase in conversions from your A/B test, you need to have a strategic hypothesis about why a particular variation might perform better than the other.

The best way to do this is to come up with a basic hypothesis for the test before you begin. Here are some examples to help illustrate what a basic hypothesis might look like:

- We believe personalizing the subject line with the subscriber’s first name will help make our campaign stand out in the inbox and increase the chance it will get opened.

- We believe using a button instead of just a text link will make the call to action stand out in the email, drawing the reader’s attention and getting more people to click through.

These simple statements, even if just said in your mind, help you define what you are going to test and what you hope to achieve from it, and keep your A/B tests focused on things that are going to get results.

2. Prioritize your A/B tests

Between subject lines, button colors, and copy changes, it’s likely that you’ll have a lot of ideas for A/B tests that you want to run.

However, not all A/B test ideas are created equal, and you want to prioritize the ideas that are most likely to get you the best results with the least effort.

To help you do this, you can use the ICE score. Created by influential marketer Sean Ellis, the ICE score is a way to grade your different A/B test ideas and prioritize which ones to run first.

The ICE score has 3 parts:

- Impact: How big of an impact do you think this might have? For instance, is testing a small change in your subject line likely to have as big of an impact as testing the tone of your copy?

- Confidence: How confident are you that this change will have a positive impact? Testing a proven tactic like personalizing the subject line is more likely to have a positive impact on conversions than changing the image style in your campaigns.

- Ease: How easy is it to implement this A/B test? For example, testing the word order of your subject line would take less than 30 seconds, whereas testing different image styles requires you to create multiple images with different styling and will generally take longer.

For each A/B test idea you have, quickly (even just in your head) assess it against the 3 elements of the ICE score to help you grade each idea and prioritize which ones you should execute on first.
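If you would rather write your scores down than keep them in your head, the grading can be sketched in a few lines of Python. The article doesn't specify how the 3 parts are combined, so this example assumes each part is rated from 1 to 10 and the 3 ratings are averaged, which is one common convention. The idea names and ratings below are invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class AbTestIdea:
    """One A/B test idea, with each ICE part rated 1 (low) to 10 (high)."""
    name: str
    impact: int
    confidence: int
    ease: int

    @property
    def ice_score(self) -> float:
        # Assumption: the score is the simple average of the 3 ratings.
        return (self.impact + self.confidence + self.ease) / 3


# Illustrative ratings only; score your own ideas for your own audience.
ideas = [
    AbTestIdea("Personalize the subject line", impact=7, confidence=8, ease=9),
    AbTestIdea("Change the image style", impact=5, confidence=4, ease=3),
    AbTestIdea("Reorder the subject line words", impact=4, confidence=5, ease=10),
]

# Highest score first: these are the tests to run first.
ranked = sorted(ideas, key=lambda idea: idea.ice_score, reverse=True)

if __name__ == "__main__":
    for idea in ranked:
        print(f"{idea.ice_score:.1f}  {idea.name}")
```

The exact numbers matter less than the ranking: the goal is simply to surface the ideas that promise the most for the least effort.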

3. Build on your learnings

Not every A/B test you run is going to result in a positive increase in conversions. Some of your variations will decrease conversions, and many won’t have any noticeable effect at all.

The key is to make sure you learn from each A/B test you run and use that knowledge to create better campaigns next time.

SitePoint does a good job of this with “Versioning,” their daily newsletter that goes to over 100,000 subscribers.

For an entire week, they ran different A/B tests on the newsletter, changing the template, testing images, testing fonts, etc.

Not every test they ran resulted in a positive increase in conversions (for instance, adding images to the email actually decreased conversions) but, with every test, they learned more about what works and what doesn’t work for their audience. This helped them to come up with a new email design that generated a 32% increase in conversions.

3 Email testing tools you should be using

Now that you know why testing your emails is important and which elements to test, let’s briefly take a look at 3 tools that can help you create the perfect email.

1. CoSchedule Headline Analyzer

Although CoSchedule built the Headline Analyzer to help analyze blog post headlines, this nifty tool also works well as a subject line tester for coming up with catchy email subject lines. It helps you balance the words in your headline so you can create one that really grabs attention and drives readers to open your email.

2. Sender Score

If your emails don’t reach your subscribers’ inboxes, it doesn’t matter how good your email headline and copy are.

That’s why a tool like Sender Score is very handy.

Sender Score allows you to check your sender reputation, a factor that affects email deliverability. If your emails are not reaching the intended inboxes, it affects your A/B testing results and, ultimately, your entire marketing campaign.

3. Campaign Monitor’s analytics suite

When it comes to ensuring that your emails get opened, read, and clicked, Campaign Monitor’s analytics suite can help you get better results.

You can easily A/B test your emails with the analytics suite by creating two versions of your email, and Campaign Monitor will send them out to your two test subscriber lists.

This tool will show you which variation of your email performs better and automatically send the winning email to the rest of your list.

Wrap up

Get started A/B testing your email campaigns today. Create a hypothesis for how you could improve your campaign, set it up as an A/B test, click send, and see what happens. You may see an increase in opens or click-throughs but, if you don’t, you’ll learn something about your audience that can help you create better campaigns in the future.

Here’s what to remember about A/B split testing to optimize your email campaigns:

- Create a hypothesis for how you could improve your campaign

- Test all critical elements of your email

- Use the right tools to improve the impact of your email

- Prioritize your A/B testing

If this article has piqued your interest to learn more about testing and optimizing elements of your email and strategy, check out our post on email testing tips.
